LiDAR – shining a light on the facts – part one

Undoubtedly a game-changer for sensor-based applications, Light Detection and Ranging (LiDAR) technology has also been the victim of many myths and misconceptions. This article, the first of two, addresses and corrects a number of these inaccuracies.

LiDAR and its applications have become more visible as the world embraces smarter, more efficient technologies. However, this increased exposure has also brought a proliferation of myths about LiDAR's efficacy, relevance and performance. This blog debunks those myths and sets out the facts.

Myth – LiDAR uses complicated technology

LiDAR sensors are complex devices consisting of numerous components; however, the underlying working principle is simple: the time of flight (ToF) method. ToF is similar to the echolocation used by bats or the microwave pulses used in radar.

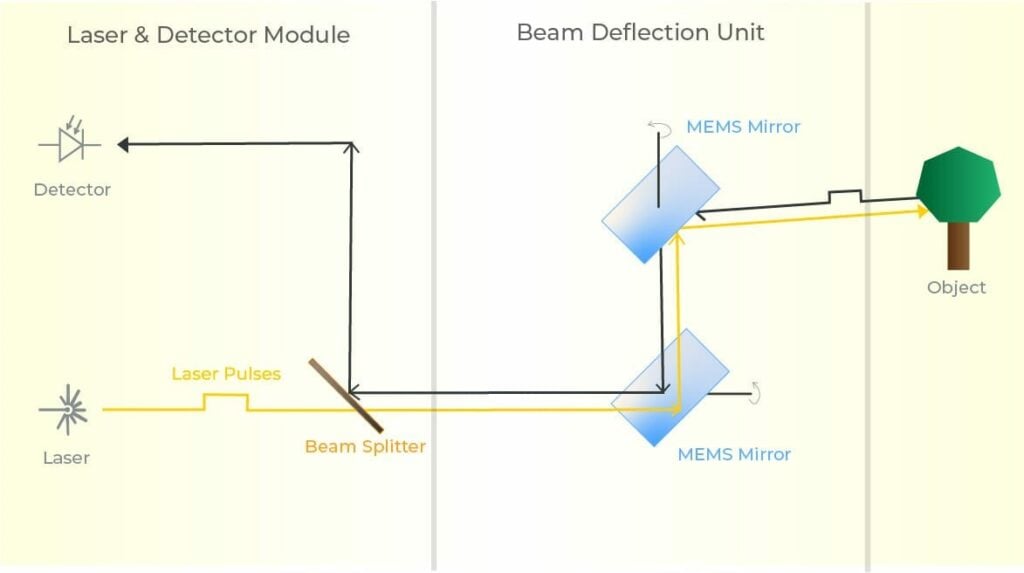

Breaking down the LiDAR sensor into its components illustrates how simple the technology is. The sensor consists of a laser, detector and beam deflection unit.

The laser emits pulses, which the beam deflection unit directs into the environment; the detector then picks up the light reflected back from objects. The time between the emission and return of each pulse is used to calculate the distance to the object. Because the light travels out and back, the distance is half the round-trip time multiplied by the speed of light.
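The distance calculation can be sketched in a few lines. This is a minimal illustration, not any manufacturer's firmware: the function name `tof_distance_m` is hypothetical, and it assumes the pulse travels at the vacuum speed of light (close enough to the speed in air for a sketch). Since the measured time covers the trip to the object and back, it is halved.

```python
# Speed of light in a vacuum, in metres per second (assumed close enough to air).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to the target from a pulse's round-trip time of flight.

    The pulse travels to the object and back, so the one-way distance is
    half of (speed of light x elapsed time).
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# A return arriving 1 microsecond after emission:
print(f"{tof_distance_m(1e-6):.2f} m")  # 149.90 m
```

In other words, each microsecond of round-trip time corresponds to roughly 150 m of range, which is why the detector's timing must be extremely precise.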

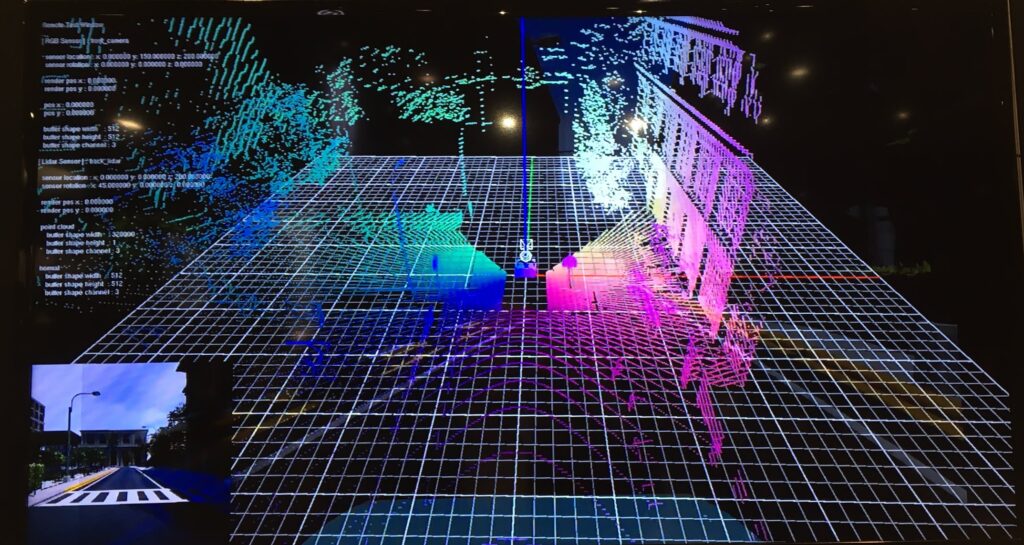

This process is repeated thousands or millions of times per second to generate a spatially accurate, real-time 3D map of the environment. The precise data contained in this map, or point cloud, can be analysed and used in numerous applications, such as autonomous vehicles.
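Each measurement is a distance plus the direction in which the beam deflection unit was pointing at that moment. A minimal sketch of turning those measurements into a point cloud follows; the axis convention (x forward, y left, z up) and the `(range, azimuth, elevation)` tuple layout are assumptions for illustration, and real sensors define their own coordinate frames.

```python
import math
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float) -> Point:
    """Convert one measurement (range plus beam direction) into an x, y, z point.

    Assumed convention: x points forward, y to the left, z up; azimuth is
    measured in the horizontal plane from x towards y, elevation upwards
    from the horizontal plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))


def build_point_cloud(measurements: Iterable[Tuple[float, float, float]]) -> List[Point]:
    """Turn (range, azimuth, elevation) tuples into a list of 3D points."""
    return [to_cartesian(r, az, el) for r, az, el in measurements]


# Three hypothetical measurements: straight ahead, to the left, and ahead but angled up.
scan = [(10.0, 0.0, 0.0), (10.0, 90.0, 0.0), (5.0, 0.0, 30.0)]
for x, y, z in build_point_cloud(scan):
    print(f"({x:.2f}, {y:.2f}, {z:.2f})")
```

Repeating this conversion for every pulse in a scan yields the point cloud that downstream applications consume.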


Myth – LiDAR is unsuitable for autonomous vehicle applications

Because they capture accurate 3D data in almost any lighting or weather conditions, LiDAR sensors are an essential element for the successful and safe realisation of autonomous vehicle applications.

Elon Musk's widely reported, and erroneous, dismissal of LiDAR sensors as a solution for autonomy has led to the spread of much misinformation and many factual inaccuracies. He has argued that LiDAR sensors are rendered redundant by cameras combined with intelligent algorithms.

Although cameras are needed to capture colour and to support image recognition techniques, a single camera captures only 2D data, which means it can be tricked by visual illusions and is prone to misjudging distances. These shortcomings have a major impact on reliability and safety, as several serious incidents have illustrated.

In contrast, LiDAR reliably and accurately captures data in 3D, identifying precise distances and dimensions and greatly reducing the scope for misinterpretation.

Adding accurate 3D LiDAR data to a platform or system plugs the considerable gaps left by cameras, such as the time a camera needs to adjust to the change in light when exiting a tunnel, or the detection of objects partially hidden by obstacles.

Additionally, although the 2D annotations generated from camera images may seem accurate enough to train autonomous vehicle algorithms, they contain many imprecisions that reduce the accuracy of the machine learning (ML) models. This significantly impairs the platform's ability to perceive, predict and plan.

The ML capabilities that enable autonomous driving also need to be scalable and to address the 'long tail': it is not sufficient to handle the 95% of scenarios that vehicles commonly face on the road. The models must also be trainable for the challenging remaining 5% of cases while continually improving performance, which would require enormous amounts of training data for a camera-only system.

LiDAR generates more accurate training data, which in turn produces more predictive ML models, making it essential for reliable and robust autonomous driving systems.

Myth – Other sensors can do the job of LiDAR

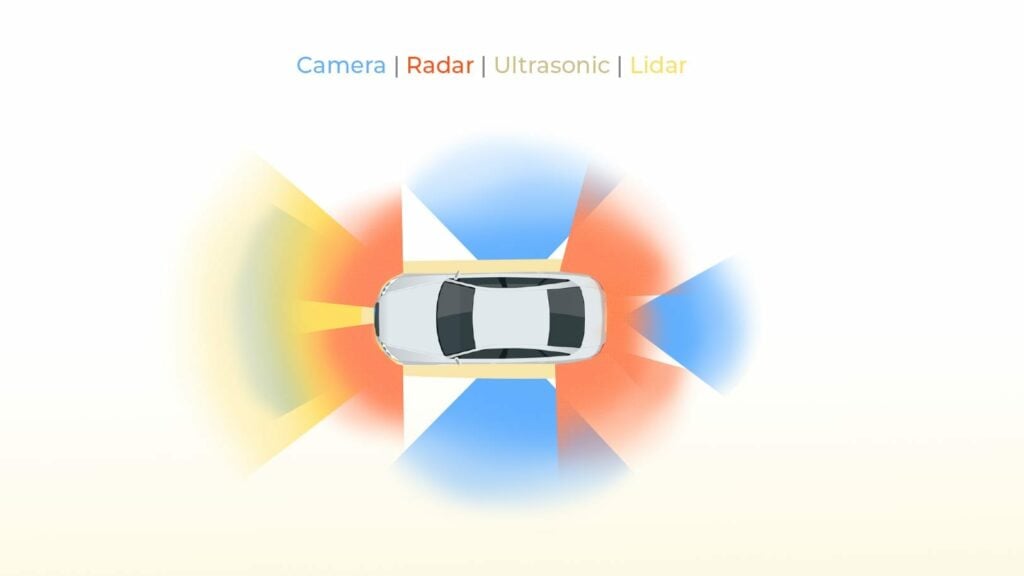

A common myth about LiDAR is that it can be replaced by camera or radar sensors. This misconception stems from a lack of understanding of how differently these sensor technologies detect and classify objects. To appreciate the complementary roles of these sensors, it is necessary to understand the capabilities of each technology and the types of data it produces.

Cameras capture a two-dimensional visual representation of the world, providing greyscale or colour information, texture and contrast data. Image recognition software is required to analyse this data before it can be used in applications. As cameras use a passive measuring principle, objects must be illuminated by ambient or artificial light to be detected. To create 3D images, multiple cameras and high computing capabilities are required.

Radar sensors measure 3D information and excel at determining the distance and speed of objects with high precision. However, the low resolution of radar means that it cannot precisely detect objects at centimetre scale, or classify them.

LiDAR identifies points in three dimensions, creating point clouds from the sensor data. Objects are detected precisely as clusters of points, and the size of each cluster can be used to divide objects into categories such as pedestrians, cars or buildings.
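The idea of detecting objects as clusters and categorising them by size can be sketched as follows. This is a deliberately simple illustration, not how any particular LiDAR vendor's software works: it uses plain Euclidean region growing (points closer than `max_gap_m` belong together) and a size-based classifier whose thresholds are invented for the example. Production perception stacks typically use spatial indexes and learned classifiers instead.

```python
import math
from collections import deque
from typing import List, Tuple

Point = Tuple[float, float, float]


def euclidean_clusters(points: List[Point], max_gap_m: float) -> List[List[Point]]:
    """Group points so each one lies within max_gap_m of another point in its cluster.

    Simple O(n^2) region growing; real pipelines use k-d trees or voxel grids.
    """
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue = deque([seed])
        members = [seed]
        while queue:
            i = queue.popleft()
            # Collect neighbours first, then remove them, so the set isn't mutated mid-scan.
            near = [j for j in unvisited if math.dist(points[i], points[j]) <= max_gap_m]
            for j in near:
                unvisited.remove(j)
                queue.append(j)
                members.append(j)
        clusters.append([points[k] for k in members])
    return clusters


def classify_by_size(cluster: List[Point]) -> str:
    """Label a cluster from its bounding-box size. Thresholds are illustrative assumptions."""
    xs, ys, zs = zip(*cluster)
    footprint = max(max(xs) - min(xs), max(ys) - min(ys))
    height = max(zs) - min(zs)
    if footprint < 1.0 and 1.0 <= height <= 2.2:
        return "pedestrian"
    if 2.5 <= footprint <= 6.0 and height < 2.5:
        return "car"
    if height > 3.0:
        return "building"
    return "unknown"


# Hypothetical scene: a thin vertical column (person) and a 4 m x 1.5 m block (car).
pedestrian = [(5.0, 0.0, 0.2 * k) for k in range(10)]
car = [(10.0 + 0.4 * i, 0.0, 0.5 * j) for i in range(11) for j in range(4)]
for cluster in euclidean_clusters(pedestrian + car, max_gap_m=0.5):
    print(len(cluster), classify_by_size(cluster))
```

The cluster order depends on set iteration, so the two lines may print in either order; the point is that the 3D extent of each cluster, which a single 2D image cannot measure directly, is what drives the classification.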

Gathering this highly detailed, reliable 3D information addresses the shortfalls of other sensor technologies, and LiDAR data stands out for its ability to detect and categorise objects. Data from cameras can then be used for deeper analysis and visual representation, while distance and speed data from radar can be cross-checked against LiDAR to improve accuracy.

It's therefore clear that sensor-based applications are best served by an array of different sensor technologies, combining cameras, radar, LiDAR and more.


Myth – Challenging environmental and weather conditions impair LiDAR performance

Cameras need a certain level of ambient light to operate, which means that at night a vehicle's cameras can see only as far as its headlights reach. LiDAR sensors, however, can achieve ranges of several hundred metres regardless of lighting conditions, because LiDAR illuminates the scene with its own infrared laser beams, whereas cameras rely on visible light from the environment. Consequently, an autonomous vehicle equipped with LiDAR can perceive its surroundings as well in complete darkness as in full daylight, even without headlights.

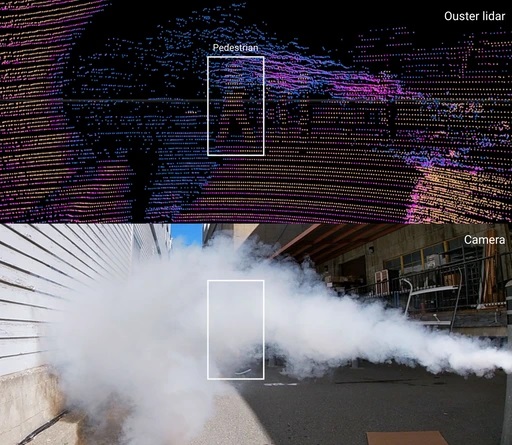

LiDAR also offers a clear advantage in challenging environmental conditions, such as rain, snow or fog.

LiDAR sensors perform better than cameras in the rain because their optical beam is large compared with a raindrop, allowing light to pass around obstructions such as droplets on the sensor mirror. As a result, LiDAR's range is largely unaffected. For a camera, by contrast, a pixel is far smaller than a raindrop, so droplets obscure parts of the field of view (FoV).

The large optical beam also enables LiDAR to detect multiple returns from different ranges for a single pulse and to process the one with the strongest signal. This is beneficial in snowy conditions, for example, where the weaker reflections from snowflakes are disregarded and only the return from the hard object in the background is processed. Without ML algorithms, cameras cannot distinguish between snowflakes, a wet lens and a hard object; they combine all of these into a distorted final picture.
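The "strongest return" selection described above amounts to picking the maximum-intensity echo out of all echoes recorded for one pulse. The sketch below assumes a hypothetical `Return` record with a range and a relative intensity in arbitrary units; real sensors expose multi-return data in their own formats and may offer other modes (for example, last return).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Return:
    range_m: float
    intensity: float  # relative echo strength, arbitrary units (assumed)


def strongest_return(returns: List[Return]) -> Optional[Return]:
    """Pick the echo with the highest intensity from one emitted pulse (None if no echo)."""
    return max(returns, key=lambda r: r.intensity, default=None)


# One hypothetical pulse in snowfall: two weak snowflake echoes, one strong echo from a wall.
pulse = [Return(3.2, 0.05), Return(7.9, 0.08), Return(42.0, 0.9)]
print(strongest_return(pulse))  # Return(range_m=42.0, intensity=0.9)
```

Because a snowflake intercepts only a small part of the beam, its echo is weak, so the hard object behind it wins the selection.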

LiDARs also have much shorter exposure times, or faster shutter speeds, than cameras: around a millionth of a second, compared with around a thousandth of a second. This means a raindrop is captured in its actual shape, rather than smeared as a streak across multiple pixels.
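The difference in exposure time translates directly into how far a falling drop moves while it is being measured. The worked arithmetic below assumes a fall speed of about 9 m/s, roughly that of a large raindrop; the speed value and function name are illustrative assumptions.

```python
def streak_length_m(speed_m_per_s: float, exposure_s: float) -> float:
    """Distance an object moves during the exposure, i.e. the length of its motion blur."""
    return speed_m_per_s * exposure_s


RAINDROP_SPEED_M_PER_S = 9.0  # assumed: approximate fall speed of a large raindrop

for name, exposure in [("LiDAR", 1e-6), ("camera", 1e-3)]:
    blur_mm = streak_length_m(RAINDROP_SPEED_M_PER_S, exposure) * 1000
    print(f"{name}: drop moves {blur_mm:.3f} mm during exposure")
```

At a microsecond the drop moves only about 0.009 mm, far less than its own size, whereas at a millisecond it moves about 9 mm, longer than a typical raindrop, which is why it appears as a streak in a camera image.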

Although heavy fog can affect LiDAR performance, LiDAR still provides significantly more useful data, at longer ranges, than cameras.

Myth – LiDAR sensors are expensive

The introduction of microelectromechanical systems (MEMS)-based LiDAR sensors has made LiDAR more cost-effective and easier to produce.

Previously, the only LiDARs available were spinning sensors, which were expensive, bulky and could not be manufactured in large volumes. A hangover from this time is the erroneous perception that LiDAR hardware prices are still high. MEMS-based LiDAR has changed the picture completely: MEMS components are produced from silicon, which makes them highly scalable and cost-efficient to manufacture.

Because they combine MEMS mirrors with other standard components and use robust technology that needs no regular maintenance, solid-state LiDARs are becoming far more affordable. Solid-state sensors can now be bought for just hundreds of pounds, rather than the thousands that would have been required not so long ago. With this downward cost trend continuing, 3D sensing has never been more accessible.

Myth – MEMS-based LiDAR sensors are not high-performance

The sensors of LiDAR manufacturer Blickfeld feature proprietary MEMS mirrors and a coaxial design, enabling a high proportion of the returning photons to be directed onto the photodetector. This measurably improves sensor performance.

It's widely acknowledged that MEMS-based sensors benefit from high scalability and low cost. However, it is also widely thought that the detection ranges of these LiDARs are limited, which is another misconception. This false impression stems from the fact that MEMS mirrors are usually very small, and, as a rule, the larger the mirror, the larger the collection area and, consequently, the longer the range. Blickfeld's MEMS mirror, however, measures more than 10 mm, so a higher proportion of photons is directed onto the photodetector.
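Why mirror size matters for range can be illustrated with a simplified model. The sketch assumes an extended target and no atmospheric loss, in which case received power scales roughly with collection area divided by range squared; at a fixed detection threshold, maximum range then scales with the square root of the area, i.e. linearly with mirror diameter. The 2 mm comparison mirror is hypothetical, and real range also depends on laser power, detector sensitivity, optics and target reflectivity.

```python
import math


def collection_area_mm2(mirror_diameter_mm: float) -> float:
    """Area of a circular aperture of the given diameter, in square millimetres."""
    return math.pi * (mirror_diameter_mm / 2.0) ** 2


def relative_max_range(d_large_mm: float, d_small_mm: float) -> float:
    """Range gain from a larger aperture under a simplified model.

    Assumes received power ~ aperture area / range**2 (extended target,
    no atmospheric loss), so at a fixed detection threshold the maximum
    range scales with sqrt(area), i.e. linearly with diameter.
    """
    area_ratio = collection_area_mm2(d_large_mm) / collection_area_mm2(d_small_mm)
    return math.sqrt(area_ratio)


# Hypothetical comparison: a 10 mm mirror against a 2 mm mirror.
print(f"area ratio:  {collection_area_mm2(10) / collection_area_mm2(2):.1f}")
print(f"range ratio: {relative_max_range(10, 2):.1f}")
```

Under these assumptions, a mirror five times wider collects 25 times more light, which would translate into roughly five times the maximum range, all else being equal.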

Another advantage of Blickfeld's LiDAR sensors is their coaxial design, in which the emitted and received light share the same optical path. This allows highly effective spatial filtering: photons are collected precisely from the direction in which they were sent, minimising background light and enabling a high signal-to-noise ratio, which further extends the range.

Head over to the second part of this article, where we will expose, explore and explain more common misconceptions about LiDAR technology.

Level Five Supplies – your LiDAR partner

Level Five Supplies offers a comprehensive portfolio of LiDAR products from some of the world’s leading manufacturers ensuring that you can find the perfect sensing solution for your application.