LiDAR – shining a light on the facts – part two

LiDAR and its applications have become more visible as the world embraces smarter, more efficient technologies. This increased exposure has brought with it a proliferation of myths about LiDAR's efficacy, relevance and performance. This blog debunks those myths and puts forward the facts.

Myth – Like cameras, LiDAR sensors raise privacy issues

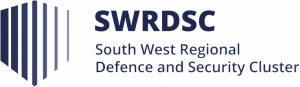

Unlike cameras, LiDAR doesn’t record any colour information, capturing only 3D distance data to create the pointcloud. This generates an anonymised picture of the environment, protecting privacy.
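To make the point concrete, here is a minimal sketch of what a pointcloud holds. It assumes a hypothetical sensor that reports each return as a range plus two beam angles; the `Point` type, the `to_point` helper and the sample values are illustrative and not taken from any particular sensor's API.

```python
import math
from typing import NamedTuple


class Point(NamedTuple):
    """One pointcloud entry: position only, no colour or texture."""
    x: float
    y: float
    z: float


def to_point(range_m: float, azimuth_deg: float, elevation_deg: float) -> Point:
    """Convert a range and two beam angles into Cartesian coordinates."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return Point(
        x=horizontal * math.cos(az),
        y=horizontal * math.sin(az),
        z=range_m * math.sin(el),
    )


# Hypothetical returns: (range in metres, azimuth in degrees, elevation in degrees)
returns = [(10.0, 0.0, 0.0), (10.0, 90.0, 0.0), (5.0, 0.0, 30.0)]
pointcloud = [to_point(*r) for r in returns]
```

Each entry is just a position in space, which is why a person appears in the data as a cluster of points rather than as a recognisable image.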

Hand in hand with the growth in sensor use has been increasing concern around privacy. This is exemplified by smart city projects. Across the world, the data collection aspect of these ventures has proved controversial, to the extent that the European Union is considering banning facial recognition technology in public spaces until such time as it is understood and can be regulated.

The main concern is that sensors capture and store images of people, which can then be used for identification via facial recognition. As the debates around surveillance and identification applications rage on, the anonymous nature of LiDAR data means that it is well placed to address these privacy concerns.

With its ability to reliably and accurately detect, classify and track pedestrians, vehicles and objects in an anonymised fashion, LiDAR is the ideal sensing solution for privacy-sensitive applications, such as security and smart spaces.

Myth – iPhone LiDAR can be used in real-world applications

The type of LiDAR sensor integrated with recent generations of iPhone, with its limited scanning range and low resolution, is far from suitable for applications in which a conventional scanning LiDAR is required.

Apple made headlines when it released the iPhone 12 Pro and iPad Pro with LiDAR sensors onboard.

iPhone LiDARs create small-scale 3D depth maps of objects, people, and surroundings by emitting light pulses in a spray of infrared dots. The sensor times how long each dot takes to reflect back, which gives the distance to that point; together, the dots form a field of points from which a 3D mesh is generated. Fundamentally, this is just an update on the previously used TrueDepth camera Face ID technology.
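Distance from a light pulse is generally derived from its time of flight: the pulse travels to the target and back, so the distance is half the round trip multiplied by the speed of light. The sketch below shows that arithmetic; the function names and the example distances are illustrative assumptions, not part of any vendor's API.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_s: float) -> float:
    """Distance to the target, given the pulse's out-and-back travel time."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Out-and-back travel time of a pulse for a target at the given distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


# Illustrative targets: a nearby object and a distant one.
near_round_trip_s = round_trip_for_distance(5.0)
far_round_trip_s = round_trip_for_distance(250.0)
```

A target 5 metres away returns the pulse after roughly 33 nanoseconds, and one 250 metres away after roughly 1.67 microseconds, which gives a sense of the timing precision these sensors need.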

But do Apple’s LiDARs measure up with conventional LiDAR technology?

iPhone LiDAR uses flash illumination rather than scanning, meaning that the whole field of view (FoV) is illuminated at once using a single pulsed, widely diverging laser beam. Conventional scanning LiDAR sensors, however, use collimated laser beams to illuminate a single point at a time within the FoV.

Range is the most marked difference between scanning LiDAR and iPhone LiDAR. Commercial applications demand far higher performance in both range and resolution than the Apple LiDAR offers. For example, the Blickfeld Cube 1 has a maximum range of 250 metres, while the iPhone LiDAR has a range of only 5 to 10 metres.

When it comes to sensor resolution, a typical scanning LiDAR like Cube 1 scans upward of 500 lines per second, generating thousands of data points and creating a high-density pointcloud. The Apple LiDAR, by contrast, apparently measures in the region of 500 points per frame, offering a much lower resolution.

While the iPhone LiDAR does improve the camera focus speed and accuracy in low lighting, at present it is clearly not suitable for large-scale applications such as autonomous vehicles or HD mapping.

Myth – LiDAR is not eye safe

A common myth about LiDAR is that it is not safe for human eyes. In fact, LiDAR sensors are manufactured to the Class 1 eye-safe (IEC 60825-1:2014) standard, ensuring eye safety.

Eye safety for LiDAR is not only based on the wavelength of the laser but on a combination of factors. A major element that contributes to the safety rating is the peak power of the laser, which determines the sensor range for a particular wavelength. Eyes are generally more sensitive to 905 nm wavelength lasers – therefore, this type of laser is used with lower peak power to ensure that it is eye-safe.

By contrast, LiDAR sensors operating at the 1550 nm wavelength can safely employ higher power, resulting in greater ranges than 905 nm lasers while remaining eye-safe. This is because the cornea, lens, and aqueous and vitreous humours absorb wavelengths greater than 1400 nm, mitigating the risk of retinal damage at longer wavelengths.

These combinations of peak power in relation to wavelength are defined by the Class 1 eye-safe (IEC 60825-1:2014) standard. All manufacturers of lasers of wavelengths between 180 nm and 1 mm must abide by this standard to ensure eye-safe operations.

There has been a view that in scenarios with multiple LiDAR sensors, such as road intersections where sensors emit waves at the same wavelength and phase, the beams could combine to create a higher-energy laser that is not eye-safe. In theory, these lasers could constructively superimpose, raising the peak power (amplitude) of the pulses and potentially breaking the eye-safe limit.

While this may be true in theory, in reality it is all but impossible. For this theoretical ‘high-energy laser’ to be generated, the LiDAR sensors would need to emit laser pulses with numerous factors perfectly aligned, such as pulse duration, divergence angle, and the exposure direction in relation to the observing eye. All of this means that it is extremely unlikely that multiple LiDAR beams would overlap at a single point in space and time.
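A rough back-of-the-envelope sketch gives a feel for how rare such an overlap is. It assumes a deliberately simple model: two independent scanning sensors, each firing short pulses that are spread evenly over many scan directions. The pulse duration, pulse rate and number of directions below are illustrative assumptions, not the specifications of any real sensor, and the model ignores the further requirement that the waves also align in phase.

```python
def illumination_fraction(pulse_duration_s: float,
                          pulse_rate_hz: float,
                          scan_directions: int) -> float:
    """Fraction of time one sensor is lighting up one given direction.

    The sensor emits light for pulse_duration_s * pulse_rate_hz of every
    second, shared evenly across scan_directions beam directions.
    """
    return pulse_duration_s * pulse_rate_hz / scan_directions


def joint_illumination_fraction(fraction_a: float, fraction_b: float) -> float:
    """Fraction of time two independent sensors light the same spot at once."""
    return fraction_a * fraction_b


# Assumed, illustrative values: 5 ns pulses, 100,000 pulses per second,
# 10,000 distinct scan directions per frame.
single = illumination_fraction(5e-9, 100_000, 10_000)
both = joint_illumination_fraction(single, single)
```

With these assumed numbers, each sensor illuminates any one spot for about 5 parts in 100 million of the time, and both do so simultaneously for a fraction on the order of 10^-15. Different assumptions shift the figure, but the product of two very small fractions remains very small.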

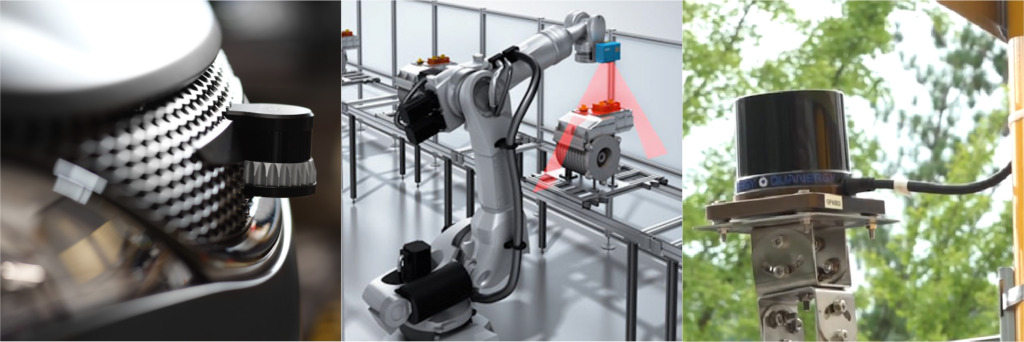

Myth – There are few applications for LiDAR sensors

This myth is swiftly debunked by our 100 applications of LiDAR article. From agriculture and autonomous vehicles to security and smart cities, there are abundant applications for LiDAR, and that number is growing as more and more industries realise the power and potential of LiDAR technology.

Level Five Supplies – your LiDAR partner

Level Five Supplies offers a comprehensive portfolio of LiDAR products from some of the world’s leading manufacturers, ensuring that you can find the perfect sensing solution for your application.