Vision for autonomous navigation systems

GPS signals, received by positioning systems such as those produced by Inertial Sense, are essential to the majority of autonomous navigation systems, which use them to determine their position and direction of travel. However, this dependence becomes a problem when GPS is not available. To address this, the Institute of Electrical and Electronics Engineers (IEEE) recommends Simultaneous Localisation and Mapping (SLAM) to augment or replace GPS signals. SLAM builds a map of the surroundings while tracking the platform's position within that map, giving your systems a more accurate estimate of their location.

Visual SLAM (vSLAM) uses an optical sensor, typically a camera, to provide information to the autonomous platform, directing its movements more accurately. This approach can offer a high degree of accuracy for autonomous robot navigation systems operating in real-world environments.

The value of vision

Most humans have little or no experience of navigating their environment without visual cues. Many autonomous navigation systems, by contrast, rely on GPS or similar positioning solutions to provide information on their location, without any ability to ‘see’ the environment. These systems operate well in environments that do not change and do not contain obstacles. However, if the terrain is challenging or objects are in motion within it, the ability to detect these obstacles is essential.

The mechanics of vision

The vision of autonomous navigation systems is not the same as the vision of humans. Different types of sensors are used to determine the platform's position and sense its surroundings:

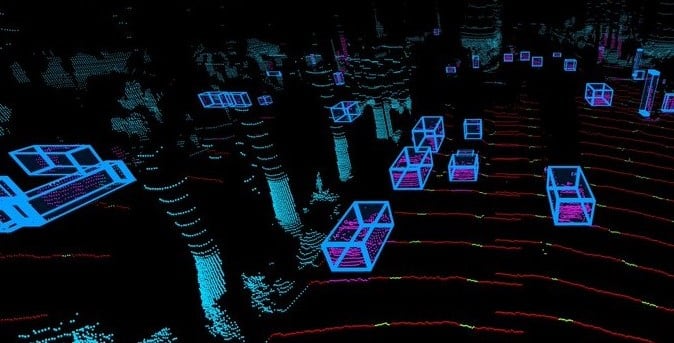

LiDAR sensors

These active sensors create detailed 3D images (point clouds) of the environment by emitting laser pulses and measuring the light that is reflected back. LiDAR sensors accurately measure distances to objects and can offer panoramic fields of view, typically achieved by rotating an internal mirror system to project and capture the light. More recent advances in LiDAR technology have enabled the development of solid-state sensors.
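To make the idea of a point cloud more concrete, the sketch below shows one way a set of range and angle measurements could be turned into 3D points. It is a minimal illustration only: the function names, the sensor-frame axis convention, and the single fixed elevation angle are assumptions made for this example, not the interface of any particular LiDAR product.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_to_range_m(round_trip_s):
    """Range from a laser pulse's round-trip time (out and back, so halve it)."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def scan_to_points(ranges_m, azimuths_deg, elevation_deg=0.0):
    """Convert range/azimuth returns into (x, y, z) points in the sensor frame.

    Assumed convention for this sketch: x forward, y left, z up.
    """
    elev = math.radians(elevation_deg)
    points = []
    for r, az_deg in zip(ranges_m, azimuths_deg):
        az = math.radians(az_deg)
        x = r * math.cos(elev) * math.cos(az)
        y = r * math.cos(elev) * math.sin(az)
        z = r * math.sin(elev)
        points.append((x, y, z))
    return points


# Hypothetical returns: one object 2 m ahead, one 1 m to the left.
cloud = scan_to_points([2.0, 1.0], [0.0, 90.0])
```

A rotating sensor would repeat this conversion for every azimuth step and every laser channel, which is how a full panoramic point cloud accumulates.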

Radar sensors

Radar is typically used to monitor blind spots and other areas, improving control and safety for the autonomous system. Radar sensors send pulses of harmless microwave radiation in a wide spread and detect the pulses that bounce back off hard objects. They are largely unaffected by weather conditions.

Ultrasonic sensors

These short-range sensors use high-frequency sound to measure the distance to an object, delivering information about obstacles close to the autonomous robot or device. Though low cost, ultrasonic sensors can be prone to noisy data caused by the literal noise of the environment.
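The distance calculation behind an ultrasonic sensor is simple enough to sketch. The example below assumes the sensor reports the round-trip echo time in seconds, uses a common linear approximation for the speed of sound in air, and applies a median over several readings as one simple way to suppress noisy outliers. The function names are hypothetical and not taken from any specific sensor driver.

```python
import statistics


def speed_of_sound_m_s(temp_c=20.0):
    """Approximate speed of sound in dry air at the given temperature."""
    return 331.3 + 0.606 * temp_c


def echo_to_distance_m(echo_time_s, temp_c=20.0):
    """Distance to the object: the pulse travels out and back, so halve it."""
    return speed_of_sound_m_s(temp_c) * echo_time_s / 2.0


def filtered_distance_m(echo_times_s, temp_c=20.0):
    """Median of several readings, so a single spurious echo is ignored."""
    return statistics.median(
        echo_to_distance_m(t, temp_c) for t in echo_times_s
    )


# Three hypothetical readings; the third is a noise spike.
distance = filtered_distance_m([0.010, 0.010, 0.050])
```

The median is used here rather than the mean because one wildly wrong echo would pull an average a long way from the true distance.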

Infrared sensors

Infrared sensors are a low-cost, useful addition to autonomous platforms for tracking and resilience. Because infrared is not visible to humans, IR flashlights can be added to platforms that need to operate in the dark, without flooding the area with visible light. These sensors can also be made or purchased fairly inexpensively and are supported by a large developer community.

Along with GPS signals and their information, each of these sensors can help autonomous navigation systems build a 3D image of the world. While these systems don’t ‘see’ in the same way that humans do, they build a virtual representation of the environment, acting on the information this representation provides to move about without running into objects. This allows the vision of autonomous navigation systems to serve the same purpose as the physical vision experienced by humans and animals.
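One simple form such a virtual representation can take is an occupancy grid: the area around the platform is divided into cells, and each cell is marked as occupied if a sensor detected something there. The sketch below is a deliberately reduced 2D version, assuming obstacle points are already expressed in metres relative to the centre of the map. The grid size, cell size, and function names are illustrative choices, not part of any system described in this article.

```python
def _cell_index(x, y, size_m, cell_m):
    """Map a point in metres (origin at map centre) to a (row, col) cell."""
    half = size_m / 2.0
    return int((y + half) // cell_m), int((x + half) // cell_m)


def build_occupancy_grid(points_xy, size_m=10.0, cell_m=0.5):
    """Mark every cell that contains at least one detected obstacle point."""
    n = int(size_m / cell_m)
    grid = [[False] * n for _ in range(n)]
    for x, y in points_xy:
        row, col = _cell_index(x, y, size_m, cell_m)
        if 0 <= row < n and 0 <= col < n:
            grid[row][col] = True
    return grid


def is_blocked(grid, x, y, size_m=10.0, cell_m=0.5):
    """True if the position falls in an occupied cell.

    Positions outside the mapped area are treated as blocked, a cautious
    assumption made for this sketch.
    """
    n = len(grid)
    row, col = _cell_index(x, y, size_m, cell_m)
    if not (0 <= row < n and 0 <= col < n):
        return True
    return grid[row][col]


# One hypothetical obstacle detected 1 m ahead of the map centre.
grid = build_occupancy_grid([(1.0, 0.0)])
```

A planner could then call `is_blocked` on each candidate waypoint before moving, which is the "acting on the information" step in miniature.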

The need for vision

Redundancy is a key ingredient of a robust navigation system. Because a GPS signal can fail if the platform passes beneath cover or is subject to interference from an ever-growing number of sources, GPS must not be the only method of navigation on board. GPS-denied areas can also have an adverse effect on the sensor fusion algorithm. Adding a vSLAM solution provides redundancy, allowing the platform to map out and follow routes even if GPS is lost.
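The redundancy idea can be illustrated with a very small fallback rule. The sketch below assumes two independent position estimates are available, one from GPS and one from vSLAM, and simply prefers GPS while it looks healthy. The `PositionFix` type, the `select_position` function, and the satellite-count health check are all hypothetical; production systems generally fuse both sources continuously rather than switching between them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PositionFix:
    x_m: float
    y_m: float
    source: str  # "gps" or "vslam"


def select_position(
    gps_fix: Optional[PositionFix],
    gps_satellites: int,
    vslam_fix: Optional[PositionFix],
    min_satellites: int = 4,
) -> Optional[PositionFix]:
    """Prefer GPS when it is usable, otherwise fall back to vSLAM.

    Four satellites is the usual minimum for a 3D GPS fix; treating that
    count as the only health check is a simplification for this sketch.
    """
    gps_ok = gps_fix is not None and gps_satellites >= min_satellites
    if gps_ok:
        return gps_fix
    if vslam_fix is not None:
        return vslam_fix
    return None  # No usable estimate: the caller should stop or hold position.


# Hypothetical case: the platform drives under cover and loses satellites.
fix = select_position(
    PositionFix(10.0, 5.0, "gps"), 2, PositionFix(10.2, 5.1, "vslam")
)
```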

Additionally, a system that uses only GPS signals has little chance of avoiding obstacles on the ground or in the air, potentially resulting in damage and consequent downtime for the robot, drone, or autonomous system. Adding visual sensors to the mix enables the platform to ‘see’ obstacles, allowing even greater autonomy in avoiding damage and navigating the terrain or airspace around it more effectively.

An IEEE Sensors Journal article from January 2021 indicates that vision-based autonomous navigation methods offer greater flexibility in changing environments, increasing the ability of these systems to learn and adapt while avoiding collisions with stationary or moving objects.

Procedia Computer Science published an article in 2020 outlining how vision assists in detecting and classifying objects for autonomous vehicles and other systems. This highlights the importance of vision for autonomous navigation when safety is a significant factor.

Increasing accuracy and effectiveness

Arguably, the key attribute of visual sensors for autonomous systems is their ability to back up, and even supersede, information coming in from GPS and other systems. Robots and drones can trust their own artificial eyes to avoid collisions. This is an essential way in which the vision of autonomous navigation systems can increase accuracy and effectiveness in real-world scenarios.

It’s clear, then, that vision sensors and perception software are essential elements for safety-critical systems and those that will operate in unfamiliar or challenging terrain. Autonomous platforms depend on vision to provide the added information needed to navigate in GPS-denied environments, avoid obstacles, and minimize the risk of collisions. As an addition to GPS and a learning tool for intelligent systems, vision is key to achieving the best results from your autonomous devices and systems.

Level Five Supplies offers a comprehensive portfolio of LiDAR, sensors and positioning products from some of the world’s leading manufacturers, ensuring that you can find the perfect sensing solution for your application.