LiDAR: How Does It Work?

Anyone following the autonomous vehicle revolution will no doubt be aware of LiDAR technology, the ubiquitous hardware used in autonomous vehicle R&D. While many see it as an essential part of developing driverless cars, the underlying 3D sensing technology has been around since the 1960s.

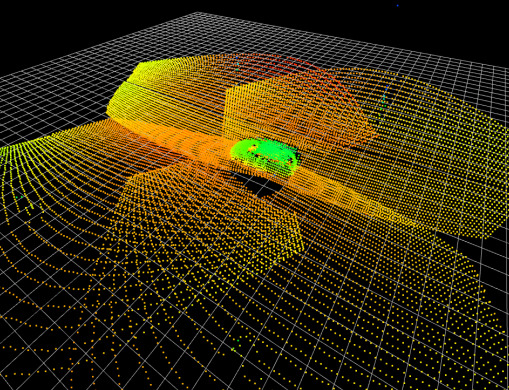

LiDAR (short for Light Detection and Ranging) units send out infrared light beams and interpret the signals that bounce back from nearby objects, forming a pixel-type view of the world known as a point cloud. The level of accuracy can vary from sensor to sensor depending on the power, configuration and resolution of the unit.

So how does LiDAR work and why is it so important in the development of autonomous vehicles?

LiDAR basics

LiDAR is an active 3D sensor: it sends out its own signal from an emitter and interprets the reflections at a receiver. Its applications span many industries; at present, LiDAR is most often used for surveying, urban planning, countryside management and conservation. In each case it builds a 3D map of the surrounding environment, and on a moving platform such as a vehicle that map is updated constantly.

While early LiDAR technology was awkward and sluggish, smaller, faster electronics mean it’s now much more easily integrated with today’s systems. In automated vehicles, LiDAR units are often combined with other sensors and cameras to monitor the vehicle’s environment in great detail, a process called sensor fusion. The resulting 3D map allows the vehicle’s processing hardware to form a picture of its environment and categorise objects such as cars, bollards and pedestrians.
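One common ingredient of LiDAR and camera fusion is projecting each LiDAR point into the camera image, so that a measured distance can be attached to the pixels showing the same object. The sketch below is a minimal illustration using the standard pinhole camera model. It assumes the point has already been transformed into the camera's coordinate frame (x right, y down, z out of the lens) by an extrinsic calibration that is not shown, and the focal lengths and image centre (`FX`, `FY`, `CX`, `CY`) are hypothetical values. Real systems also correct for lens distortion.

```python
from typing import Optional, Tuple

def project_to_image(point_cam: Tuple[float, float, float],
                     fx: float, fy: float, cx: float, cy: float
                     ) -> Optional[Tuple[float, float]]:
    """Project a 3D point (camera frame, metres) to pixel coordinates.

    Pinhole model: u = fx * x / z + cx, v = fy * y / z + cy, with z pointing
    out of the lens. Points at or behind the camera return None.
    """
    x, y, z = point_cam
    if z <= 0:
        return None  # not in front of the camera, so it cannot appear in the image
    return (fx * x / z + cx, fy * y / z + cy)

# Hypothetical intrinsics for a 1280x720 camera.
FX = FY = 1000.0
CX, CY = 640.0, 360.0

for p in [(0.0, 0.0, 10.0), (1.0, -0.5, 10.0), (0.0, 0.0, -3.0)]:
    print(p, "->", project_to_image(p, FX, FY, CX, CY))
```

A point straight ahead lands on the image centre, and a point behind the camera is discarded rather than wrapped onto the image.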

How it works

High-speed pulses of laser light, around 150,000 per second, are fired at nearby surfaces. The sensor measures how long each pulse takes to bounce back. Because light travels at a known speed, half the round-trip time multiplied by the speed of light gives the distance between the sensor and the target. By repeating this measurement in quick succession, the unit builds a detailed picture of its surroundings and refreshes it far faster than the car itself is moving.
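The time-of-flight calculation described above fits in a few lines. This is a minimal sketch, not any particular sensor's firmware: it assumes the round-trip time has already been measured, and it uses the vacuum speed of light, ignoring the small correction for air.

```python
# Speed of light in a vacuum, in metres per second (exact by definition).
SPEED_OF_LIGHT = 299_792_458.0

def tof_distance(round_trip_seconds: float) -> float:
    """Return the distance in metres to a target from a measured round-trip time.

    The pulse travels to the target and back, so the one-way distance is half
    of (speed of light x round-trip time). The refractive index of air is
    ignored, which introduces an error of roughly 0.03%.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0

# A pulse that returns after 1 microsecond hit something about 150 m away.
print(f"{tof_distance(1e-6):.1f} m")
```

The division by two is the step most often forgotten: the measured time covers the journey out and back.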

Where a camera-based vision system has to infer depth by processing and interpreting two-dimensional images, a LiDAR sensor measures distance directly, making millions of measurements in all directions in real time.

LiDAR resolution

LiDAR units offer different levels of resolution, just like cameras. To be effective vehicle sensors, they need to map the surrounding area in enough detail for the software to make accurate sense of the objects around it. The problem is that the infrared beams spread out as they travel, and neighbouring beams drift further apart with distance. Close-up resolution is therefore usually excellent, but objects further away are captured more coarsely. This is why LiDAR is often paired with additional sensors: cameras, radar, ultrasonic, etc.
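The fall-off in detail with distance follows from simple geometry: adjacent measurements are separated by a fixed angle, so the gap between them grows linearly with range. The sketch below assumes a hypothetical sensor with a 0.2° step between neighbouring measurements; real units quote different horizontal and vertical resolutions, and beam divergence enlarges each individual spot in the same linear way.

```python
import math

def point_spacing(range_m: float, angular_step_deg: float) -> float:
    """Approximate gap in metres between neighbouring points at a given range.

    Two beams separated by a fixed angle are 2 * r * sin(step / 2) apart at
    range r, which is close to r * step (in radians) for small angles.
    """
    return 2.0 * range_m * math.sin(math.radians(angular_step_deg) / 2.0)

# Hypothetical sensor: 0.2 degrees between adjacent measurements.
for r in (10, 50, 100, 200):
    print(f"{r:>4} m -> {point_spacing(r, 0.2):.3f} m between points")
```

Doubling the range doubles the gap, so a small object that is covered by several points close up may be hit by only one, or none, at long range.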

The beams from a LiDAR unit bounce back after hitting surfaces in 3D space, generating a point cloud: an intricate collection of points to be categorised and understood. The points are often converted into polygons, whose shapes are easier for a computer to process.
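Each raw return is a distance plus the direction the beam was pointing, and turning those into a point cloud is a spherical-to-Cartesian conversion. This is a minimal sketch under an assumed axis convention (x forward, y left, z up, azimuth measured from x towards y, elevation upwards from horizontal); individual sensors and software packages define their own conventions, so check the one your hardware uses.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]

def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float) -> Point:
    """Convert one return (range, horizontal angle, vertical angle) to x, y, z."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # length of the return projected onto the ground plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))

def build_point_cloud(returns: List[Tuple[float, float, float]]) -> List[Point]:
    """Turn a list of (range, azimuth, elevation) returns into a point cloud."""
    return [to_cartesian(r, az, el) for r, az, el in returns]

# Three hypothetical returns: straight ahead, 90 degrees to the left, slightly upwards.
cloud = build_point_cloud([(10.0, 0.0, 0.0), (5.0, 90.0, 0.0), (20.0, 0.0, 2.0)])
for x, y, z in cloud:
    print(f"x={x:6.2f}  y={y:6.2f}  z={z:6.2f}")
```

The conversion preserves each point's distance from the sensor, so nothing measured is lost; it is simply expressed in coordinates that later processing steps can use directly.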

LiDAR limitations

LiDAR has one key limitation: it can’t see beyond solid objects. This is true for any system that relies on a signal travelling in a straight line. So if your vehicle prototype has only one LiDAR unit and a couple of bikers pull up alongside you, the sensor is blind to what’s behind them.
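The shadowing effect can be illustrated with a toy scan in which each beam direction reports only its nearest return. Everything here is a simplifying assumption for illustration: obstacles are described by a hypothetical `(name, start azimuth, end azimuth, range)` tuple, the scan is a flat 360° sweep at a fixed step, and spans that wrap past 360° are not handled.

```python
from typing import List, Set, Tuple

# Hypothetical obstacle description:
# (name, start azimuth in degrees, end azimuth in degrees, range in metres)
Obstacle = Tuple[str, float, float, float]

def visible_objects(obstacles: List[Obstacle], step_deg: float = 0.5) -> Set[str]:
    """Return the names of objects that at least one beam reaches first.

    A beam only reports its nearest return, so an object is seen only if it
    is the closest thing along at least one sampled azimuth.
    """
    visible: Set[str] = set()
    steps = int(round(360.0 / step_deg))
    for i in range(steps):
        azimuth = i * step_deg
        # Every object this beam direction intersects, paired with its range.
        hits = [(rng, name) for name, start, end, rng in obstacles
                if start <= azimuth <= end]
        if hits:
            visible.add(min(hits)[1])  # nearest hit wins; anything behind it is shadowed
    return visible

scene = [
    ("cyclists", 80.0, 100.0, 2.0),  # pulled up alongside the car
    ("car", 85.0, 95.0, 10.0),       # directly behind the cyclists
    ("van", 70.0, 75.0, 15.0),       # off to one side, in clear view
]
print(sorted(visible_objects(scene)))
```

The car is missing from the result even though it is only 10 m away, because every beam that points towards it hits the cyclists first. A second LiDAR unit mounted elsewhere on the vehicle would see the scene from a different angle.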

If the unit is obscured by anything at close range, you could lose a lot of data. Likewise, adverse weather conditions or interfering signals from another LiDAR unit can disrupt the infrared signals. Leading developers claim to have solutions to these challenges.

LiDAR in practice

To make a 3D map, you need a laser and something that detects the reflected light. The laser beam has to be pulsed and directed; there are several ways of doing this.

- Mechanically scanned LiDAR is considered the conventional approach: a moving mirror directs the beam to create a field of view around the car.

- MEMS-mirror LiDAR uses micro-electromechanical mirrors to do the same thing on a much smaller scale.

- Optical phased array LiDAR, a form of solid-state LiDAR, uses an array of optical emitters with adjustable phases to steer the light beams from the sensor, with no moving parts.
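The way a phased array steers light without moving parts can be shown with the textbook relation for a uniform linear array: if neighbouring emitters are a distance d apart and each is delayed by a phase step Δφ relative to the last, their waves reinforce at an angle θ where sin θ = λΔφ / (2πd). The sketch below applies that relation to a hypothetical array of 1,550 nm emitters spaced 2 micrometres apart; the numbers are illustrative, not those of any real product.

```python
import math

def steering_angle_deg(wavelength_m: float, pitch_m: float, phase_step_rad: float) -> float:
    """Main-beam direction, in degrees, for a uniform linear phased array.

    Emitters pitch_m apart, each shifted by phase_step_rad from its neighbour,
    add up in phase at angle theta where
        sin(theta) = wavelength * phase_step / (2 * pi * pitch).
    """
    s = wavelength_m * phase_step_rad / (2.0 * math.pi * pitch_m)
    if abs(s) > 1.0:
        raise ValueError("no real steering angle for this phase step")
    return math.degrees(math.asin(s))

# Hypothetical array: 1,550 nm light, emitters 2 micrometres apart.
for phase in (0.0, math.pi / 4, math.pi / 2):
    print(f"phase step {phase:.3f} rad -> {steering_angle_deg(1550e-9, 2e-6, phase):.2f} deg")
```

Changing only the electronic phase step moves the beam. With emitters spaced more than half a wavelength apart, as in this example, the array also radiates unwanted grating lobes, which is one reason designers aim for a tight emitter pitch.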

LiDAR units use a semiconductor diode laser, a more powerful variation of the kind you’d find in a laser printer. The light itself is infrared and invisible to the human eye. Wavelengths beyond about 1,400 nanometres are largely absorbed in the front of the eye before they reach the retina, so lasers at those wavelengths can run at higher power, and therefore reach further, while remaining eye-safe. Some of today’s self-driving car lasers use wavelengths of around 1,550 nanometres to scan up to 200 metres ahead.
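Range and pulse rate are linked by timing: an echo should arrive before the next pulse is fired, or the sensor cannot tell which pulse it belongs to. The sketch below checks the 200-metre range and the 150,000 pulses per second quoted in this article against each other. It assumes a single laser channel with one pulse in flight at a time; multi-channel units and pulse-coding schemes relax this limit.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second, in a vacuum

def round_trip_time(range_m: float) -> float:
    """Seconds for a pulse to reach a target range_m away and return."""
    return 2.0 * range_m / SPEED_OF_LIGHT

def max_unambiguous_range(pulses_per_second: float) -> float:
    """Farthest range whose echo returns before the next pulse is fired."""
    return SPEED_OF_LIGHT / (2.0 * pulses_per_second)

print(f"200 m round trip: {round_trip_time(200) * 1e6:.3f} microseconds")
print(f"150,000 pulses/s: echoes unambiguous out to {max_unambiguous_range(150_000):.0f} m")
```

A 200-metre round trip takes only about 1.3 microseconds, well inside the gap between pulses at that rate, which is why range is limited mainly by how much reflected light returns rather than by timing.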


LiDAR sensors in autonomous cars

An ongoing debate is whether LiDAR technology is essential to autonomous vehicles. Some car manufacturers, including Tesla, rely on cameras and radar-based systems instead, which are cheaper and more mature technologies in the automotive industry. On one hand, it’s easier to find professionals experienced with these technologies; on the other, these manufacturers miss out on the abundant 360° 3D data a LiDAR sensor offers. Ultimately, the choice of sensor will depend on the application, range requirements and budget of the developers.

3D sensing will become more and more prevalent in future vehicles as faster computing and smaller electronics make LiDAR easier to integrate with existing technology. With LiDAR playing such a meaningful role in the future of driverless cars, manufacturers are under growing pressure to choose the right sensor for their needs.

Discover the ideal LiDAR solution for your application: contact us today.

Specialist LiDAR training

With so many superb products available, it can be a challenge to work out the best solution for your application. Level Five Supplies delivers specialist LiDAR training courses that give you insight into, and understanding of, the technology.

Our one- and two-day training courses offer the ideal combination of theory and practice, ensuring you develop a sound working knowledge of LiDAR hardware and software. You will be able to generate, record and interpret point clouds for robotics, surveying and autonomous systems with this exclusive opportunity to understand the fundamentals of digital 3D LiDAR.

Find out more about the Introductory and Integrators courses.