Autonomous Cars 101: What Sensors Are Used in Autonomous Vehicles?

As autonomous vehicles move closer to reality, a growing number of startups, universities, car manufacturers and technology companies are investing in the development of automated driving, with no sign of slowing down.

If you’re heading down the road (pardon the pun) to autonomy, it can be hard to know where to start. If you’re just beginning to learn about autonomous technology, this introductory roundup is for you.

Why do autonomous cars need so many sensors?

Imagine trying to drive down the road with a completely frosted over windscreen. It would be a matter of seconds before you hit something or ran off the road.

Autonomous vehicles are no different. They must be able to ‘see’ their environment in order to know where they can and cannot drive, detect other vehicles on the road, stop for pedestrians, and handle any unexpected circumstances they may encounter.

Each type of sensor has its own strengths and weaknesses in terms of range, detection capabilities, and reliability. A host of technologies is required to provide the redundancy needed to sense the environment safely. Combining the data from two or more heterogeneous sensors, such as a camera and a radar, is called sensor fusion.
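
As a minimal sketch of the idea, assume a camera and a radar each report a distance to the same object along with a known measurement variance. One simple way to combine them is inverse-variance weighting, where the more certain sensor gets more say. The function name and all the numbers here are hypothetical, and real vehicles typically use more elaborate methods.

```python
def fuse_estimates(value_a, var_a, value_b, var_b):
    """Combine two independent estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance, so the
    more certain sensor has more influence on the result.
    """
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    weight_a = 1.0 / var_a
    weight_b = 1.0 / var_b
    fused_value = (weight_a * value_a + weight_b * value_b) / (weight_a + weight_b)
    fused_var = 1.0 / (weight_a + weight_b)
    return fused_value, fused_var


# Hypothetical readings: camera says 25.0 m (variance 4.0),
# radar says 24.0 m (variance 1.0).
distance, variance = fuse_estimates(25.0, 4.0, 24.0, 1.0)
print(f"fused distance: {distance:.2f} m, variance: {variance:.2f}")
```

The fused variance is smaller than either input variance, which is one way of seeing why redundancy between sensors is useful.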

Autonomous vehicle sensor categories

Automotive sensors fall into two categories: active and passive sensors.

Active sensors send out energy in the form of a wave and look for objects based upon the information that comes back. One example is radar, which emits radio waves that are returned by reflective objects in the path of the beam.
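
One common way for an active sensor to turn a returned wave into a distance is to time the round trip of a pulse. The sketch below assumes that approach; the function name and the example timings are hypothetical, and the speed of sound is an approximate value for air at room temperature.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # radio waves and laser pulses
SPEED_OF_SOUND_M_S = 343.0          # approximate, air at about 20 degrees C


def range_from_echo(round_trip_s, wave_speed_m_s):
    """Distance to a reflector given the round-trip time of a pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so halve the total path.
    return wave_speed_m_s * round_trip_s / 2.0


# Hypothetical echo times: 1 microsecond for radar, 10 ms for ultrasound.
radar_range = range_from_echo(1e-6, SPEED_OF_LIGHT_M_S)
ultrasonic_range = range_from_echo(0.01, SPEED_OF_SOUND_M_S)
print(f"radar: {radar_range:.1f} m, ultrasonic: {ultrasonic_range:.3f} m")
```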

Passive sensors, such as cameras, simply take in information from the environment without emitting a wave.

Cameras

Cameras are already commonplace in modern cars. Since 2018, all new vehicles in the US have been required to be fitted with reversing cameras as standard. Any car with a lane departure warning (LDW) system will use a front-facing camera to detect painted markings on the road.

Autonomous vehicles are no different. Almost all development vehicles today feature some sort of visible light camera for detecting road markings – many feature multiple or panoramic cameras for building a 360-degree view of the vehicle’s environment.

Cameras are very good at detecting and recognizing objects, so the image data they produce can be fed to AI-based algorithms for object classification.

Some companies, such as Intel Mobileye, rely on cameras for almost all of their sensing. However, cameras are not without their drawbacks. Just like your own eyes, visible light cameras have limited capabilities in conditions of low visibility. Additionally, using multiple cameras generates a lot of video data to process, which requires substantial computing hardware.

Beyond visible light cameras, there are also infrared cameras, which offer superior performance in darkness and additional sensing capabilities.

Radar

As with cameras, many ordinary cars already have radar sensors as part of their driver assistance systems – adaptive cruise control, for example.

Automotive radar is typically found in two varieties: 77GHz and 24GHz. 79GHz radar will be offered soon on passenger cars. 24GHz radar is used for short-range applications, while 77GHz sensors are used for long-range sensing.

Radar works best at detecting objects made of metal. It has a limited ability to classify objects, but it can accurately tell you the distance to a detected object. However, metal objects at the side of the road, such as a dented guard rail, can produce unexpected returns that development engineers have to deal with.

LiDAR

LiDAR (Light Detection and Ranging) is one of the most hyped sensor technologies in autonomous vehicles and has been used since the early days of self-driving car development. A hugely versatile technology, it is increasingly being used in a wide range of applications.

LiDAR systems emit laser beams at eye-safe levels. The beams hit objects in the environment and bounce back to a photodetector. The beams returned are brought together as a point cloud, creating a three-dimensional image of the environment.
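
To illustrate how individual returns become a point cloud, the sketch below converts each return from a range and a pair of beam angles into an (x, y, z) point. The axis convention, the function name, and the sample returns are assumptions made for illustration; real sensors define their own coordinate frames and data formats.

```python
import math


def to_cartesian(range_m, azimuth_deg, elevation_deg):
    """Convert one LiDAR return from spherical to Cartesian coordinates.

    Assumed convention: x forward, y left, z up; azimuth is measured
    from the x axis in the horizontal plane, elevation from that plane.
    """
    azimuth = math.radians(azimuth_deg)
    elevation = math.radians(elevation_deg)
    horizontal = range_m * math.cos(elevation)
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = range_m * math.sin(elevation)
    return (x, y, z)


# Hypothetical returns: (range in metres, azimuth, elevation in degrees).
returns = [(10.0, 0.0, 0.0), (10.0, 90.0, 0.0), (5.0, 0.0, 30.0)]
point_cloud = [to_cartesian(r, az, el) for r, az, el in returns]
for point in point_cloud:
    print(tuple(round(c, 3) for c in point))
```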

This is highly valuable information as it allows the vehicle to sense everything in its environment, be it vehicles, buildings, pedestrians or animals. That is why so many development vehicles feature a large 360-degree rotating LiDAR sensor on the roof, providing a complete view of their surroundings.

While LiDAR is a powerful sensor, it’s also the most expensive sensor in use. Some of the high-end sensors run into thousands of dollars per unit. However, many researchers and startups, such as Ouster and Luminar, are working on new LiDAR technologies, including solid-state sensors, which are considerably less expensive.

Ultrasonic sensors

Ultrasonic sensors have been commonplace in cars since the 1990s for use as parking sensors, and are very inexpensive. Their range is limited to just a few metres in most applications, but they are ideal for providing additional sensing capabilities to support low-speed use cases.

The sensors discussed above aren’t the only source of information for a self-driving car to know where it is and where to go. Other source inputs include Inertial Measurement Units (IMUs), GPS, Vehicle-to-Everything (V2X) communication, and high definition maps.

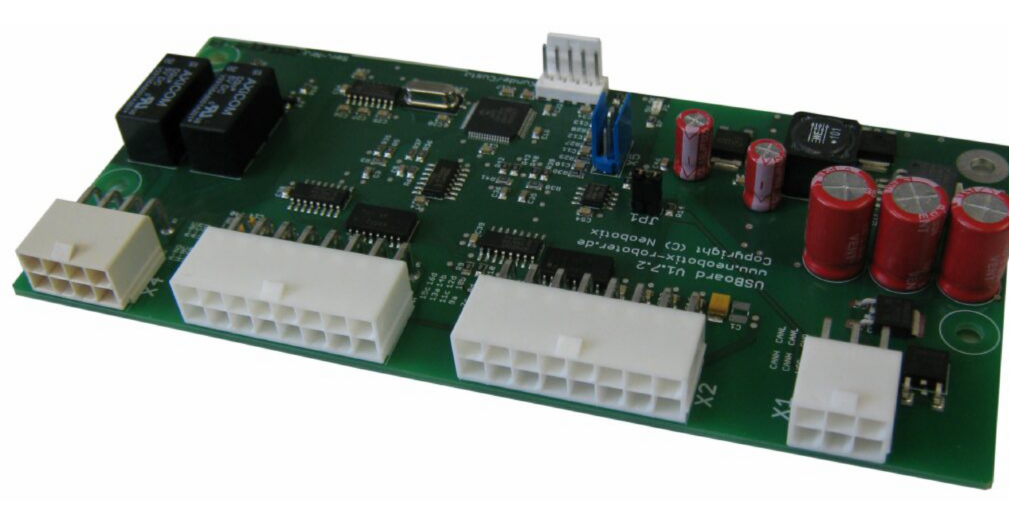

Conventional automotive ultrasonic sensors provide an analogue output voltage, which is correlated to the distance from an object. While these are low cost, additional hardware is needed (such as the Neobotix sensing kit) to interpret this data and make it suitable for an external computer-controlled autonomy solution.
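
As a rough sketch of what that interpretation step involves, the function below maps an analogue voltage to a distance by linear interpolation. Every calibration value is hypothetical and the linear relationship is itself an assumption; a real sensor's datasheet defines the actual voltage range, distance range, and curve shape.

```python
def voltage_to_distance(voltage_v, v_min=0.5, v_max=4.5, d_min_m=0.2, d_max_m=4.0):
    """Map an analogue output voltage to a distance by linear interpolation.

    All default calibration values are hypothetical placeholders.
    """
    # Clamp so out-of-range voltages do not extrapolate beyond the
    # distances the (assumed) calibration covers.
    clamped = min(max(voltage_v, v_min), v_max)
    fraction = (clamped - v_min) / (v_max - v_min)
    return d_min_m + fraction * (d_max_m - d_min_m)


for reading in (0.5, 2.5, 4.5):
    print(f"{reading:.1f} V -> {voltage_to_distance(reading):.2f} m")
```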

German manufacturer Toposens has further developed ultrasonic sensors to provide a point-cloud-like output, at a much higher resolution. Their approach provides much greater levels of accuracy and object information as a result.

GNSS

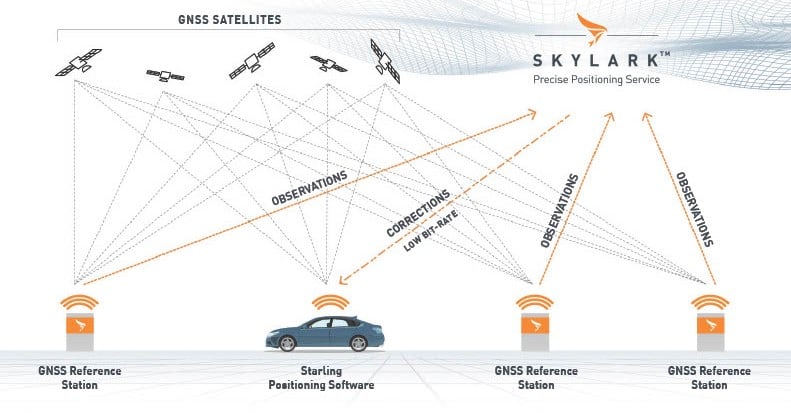

Global navigation satellite systems (GNSS) use trilateration to determine the position of a receiver in three-dimensional space, calculating the distance between the receiver and multiple orbiting satellites, most of them in medium Earth orbit, each of which transmits a time signal. Between 5 and 15 satellites are used. However, these signals are weak: they can be distorted by the Earth’s atmosphere, can bounce off buildings (the urban canyon effect), and can be jammed or deliberately faked (spoofing).
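
The distance-based positioning described above can be illustrated with a deliberately simplified two-dimensional sketch: given the distances to three known points, the receiver's position falls out of a small linear system. This is not how a real receiver is implemented. A real GNSS solution works in three dimensions and also has to solve for the receiver's clock error, which is why at least four satellites are needed. The function name, anchor positions, and test position are all hypothetical.

```python
import math


def trilaterate_2d(anchors, ranges):
    """Locate a receiver in 2D from its distances to three known points.

    Subtracting the first range equation from the other two removes the
    squared unknowns and leaves a 2x2 linear system, solved here with
    Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = ranges
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is ambiguous")
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - b1 * a21) / det
    return x, y


# Hypothetical transmitter positions and a known true position,
# used only to generate noise-free ranges for the demonstration.
anchors = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0)]
true_position = (30.0, 40.0)
ranges = [math.dist(anchor, true_position) for anchor in anchors]
estimate = trilaterate_2d(anchors, ranges)
print(estimate)
```

With noisy ranges and more than the minimum number of transmitters, the same equations are usually solved in a least-squares sense instead.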

The main methods used to overcome these are real-time kinematic (RTK) positioning, which applies correction data from a fixed base station (often delivered over the local cellular network), and the use of an Inertial Measurement Unit (IMU). How these signals are combined determines the quality of the resulting location information. Advanced systems of this kind can achieve a positional accuracy of around 1cm.

Although GNSS is often called GPS, GPS (Global Positioning System) is specifically the network of satellites run by the US Government. The systems run by China (BeiDou), Europe (Galileo) and Russia (GLONASS) are also used, so make sure the GNSS receiver you want to use can receive the best signals for your geographic area.

Other sensors

Sensors within a vehicle’s electro-mechanical systems are also valuable. These might include angle and torque sensors on the steering column, wheel odometry, and feedback on rotation via the braking system.
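
As a small illustration of wheel odometry, the sketch below estimates the distance a wheel has rolled from a count of encoder ticks. The function name, tick resolution, and wheel diameter are hypothetical, and the calculation assumes the wheel does not slip.

```python
import math


def distance_from_ticks(ticks, ticks_per_rev, wheel_diameter_m):
    """Distance rolled by a wheel, from its encoder tick count."""
    if ticks_per_rev <= 0:
        raise ValueError("ticks_per_rev must be positive")
    circumference = math.pi * wheel_diameter_m
    return ticks / ticks_per_rev * circumference


# Hypothetical values: 48 ticks per revolution, 0.65 m wheel diameter.
travelled = distance_from_ticks(960, 48, 0.65)
print(f"{travelled:.2f} m")
```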

Bringing it all together

All of these sensors output different types of data – and lots of it. This requires a considerable computing platform to fuse the data together and create a consolidated view of the vehicle’s environment. Launched in 2019, Level Five Supplies is a comprehensive supplier of autonomous vehicle hardware and technology.